
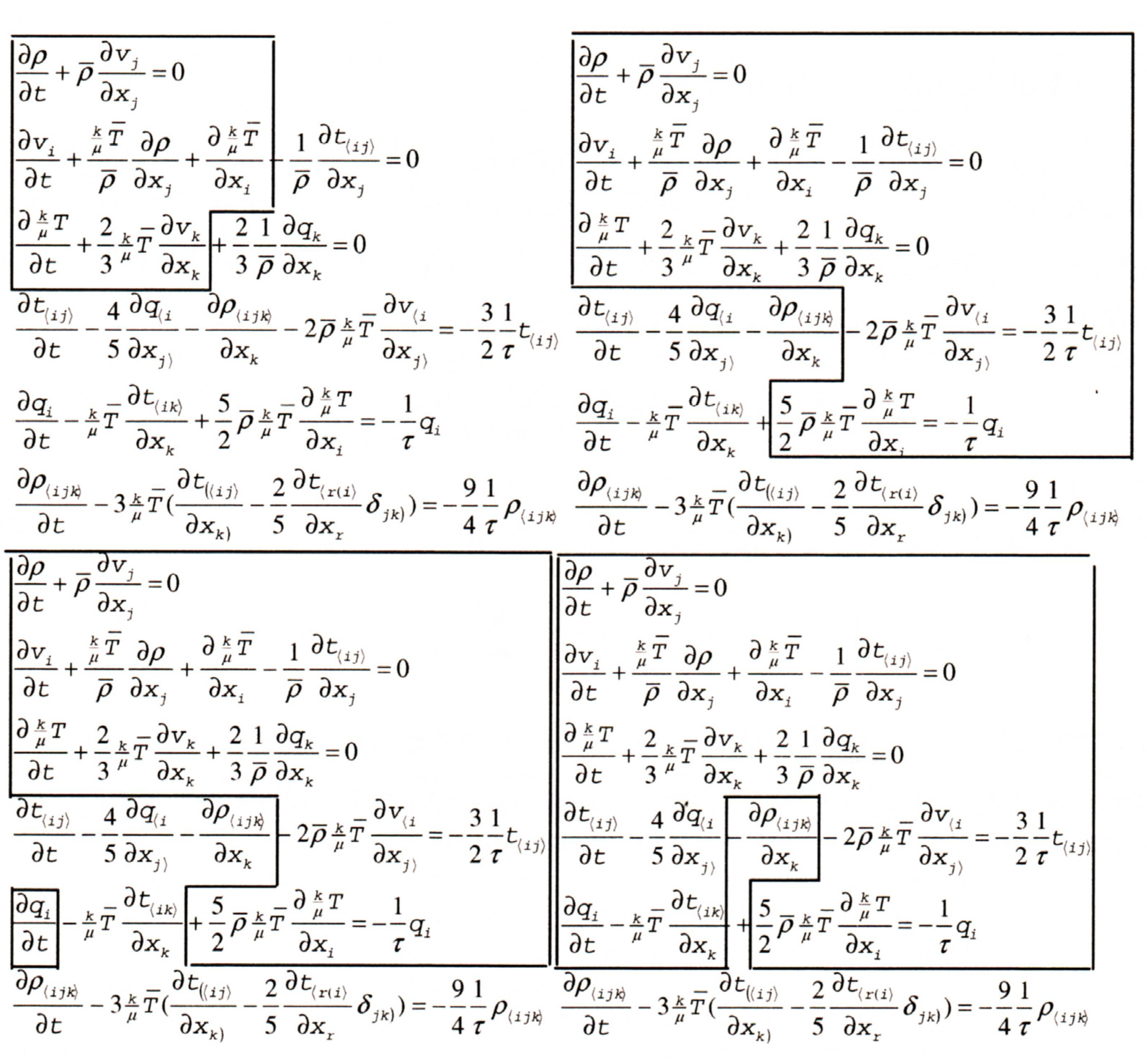

Entropy table

8/17/2023

Entropy is one of the most fundamental concepts of physical science, with far-reaching consequences ranging from cosmology to chemistry. It is a scientific concept used in calculating and observing the uncertainty, randomness, or chaos seen in a system, and it is widely misrepresented as simply a measure of 'disorder'.

Ammonia: molecular weight 17. Earlier, the value of 185.3 J/(mol·K) was calculated from experimental data (Giauque W.F., 1931). The calorimetric value is significantly higher than the statistically calculated entropy, 186.26 J/(mol·K), which remains the best value for use in thermodynamic calculations (Vogt G.J., 1976; Friend D.G., 1989; Gurvich, Veyts, et al., 1989).

Thermodynamics: Enthalpy, Entropy, Mollier Diagram and Steam Tables M08-005.

For contingency tables: if only x is supplied, it is interpreted as a contingency table, and y is a vector with the same type and dimension as x. The entropy of the whole of each contingency table is not used in computing the degrees of association of the variables, but it is a major entropy. Ranking mutual information can help to describe clusters; the R example carries this step as commented-out lines:

`# r.mi_r <- apply( -r.mi, 2, rank, na.last=TRUE )`

`# attributes(r.mi)$dimnames <- attributes(tab)$dimnames`

See also: the package entropy, which implements various estimators of entropy, and EntropyHub.

Shannon, C. E. (1948) A Mathematical Theory of Communication, Bell System Technical Journal 27 (3): 379-423.

Ihara, Shunsuke (1993) Information theory for continuous systems, World Scientific.

The Shannon entropy is given by the formula \(H = -\sum_i \pi_i \log(\pi_i)\), where \(\pi_i\) is the probability of character number i showing up in a stream of characters of the given "script".

Cross-entropy loss is used for classification machine learning models, and its average value is often followed as the model is being trained.
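As a minimal sketch of the formula H = -sum(pi log(pi)) quoted above, the following Python computes the entropy of a probability table and a cross-entropy between a true and a predicted distribution. The function names `entropy_from_probs` and `cross_entropy` are hypothetical, chosen for illustration; they are not the R function or any package mentioned in this post. The default base of 2 for entropy (bits) and the natural logarithm for cross-entropy are assumptions.

```python
import math


def entropy_from_probs(probs, base=2.0):
    """Return H = -sum(p * log(p)) for a discrete probability distribution.

    Zero-probability entries contribute nothing, by the usual convention
    that 0 * log(0) is taken as 0.
    """
    if any(p < 0 for p in probs):
        raise ValueError("probabilities must be non-negative")
    if not math.isclose(sum(probs), 1.0, abs_tol=1e-9):
        raise ValueError("probabilities must sum to 1")
    return -sum(p * math.log(p, base) for p in probs if p > 0)


def cross_entropy(true_probs, predicted_probs, base=math.e):
    """Return -sum(t * log(q)) for true distribution t and prediction q.

    Raises ValueError (from math.log) if a predicted probability is 0
    where the true probability is positive.
    """
    if len(true_probs) != len(predicted_probs):
        raise ValueError("distributions must have the same length")
    return -sum(
        t * math.log(q, base)
        for t, q in zip(true_probs, predicted_probs)
        if t > 0
    )


if __name__ == "__main__":
    # A fair coin carries one bit of entropy.
    print(entropy_from_probs([0.5, 0.5]))
    # Loss for a one-hot label when the model predicts 0.5 for each class.
    print(cross_entropy([1.0, 0.0], [0.5, 0.5]))
```

When the predicted distribution equals the true one, the cross-entropy reduces to the entropy of the true distribution (in the same base), which is why a lower cross-entropy indicates a better match.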
In information theory, entropy is a measure of the uncertainty in a random variable. In this context, the term usually refers to the Shannon entropy, which quantifies the expected value of the message's information. The Shannon entropy equation provides a way to estimate the average minimum number of bits needed to encode a string of symbols, based on the frequency of the symbols. This online calculator computes Shannon entropy for a given event probability table and for a given message.

In thermodynamics, entropy is defined as a quantity that represents the amount of thermal energy of a given system that cannot be converted into productive work. The value of entropy at a specified state is determined just like any other property. Compressed liquid: from tables. Saturated liquid (sf): from tables.

A compact and simplified thermodynamics desk reference.

The Standard Molar Entropies of Selected Substances at 298.

Computes Shannon entropy and the mutual information of two variables. The entropy quantifies the expected value of the information contained in a vector. The mutual information is a quantity that measures the mutual dependence of the two random variables.

x is a vector or a matrix of numerical or categorical type; if only x is supplied, it will be interpreted as a contingency table. y is a vector with the same type and dimension as x; if y is not NULL, then the entropy of table(x, y, ...) is calculated. The base of the logarithm to be used defaults to 2. Further arguments are passed to the function table.
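The two quantities described above can be sketched in a few lines of Python. This is an illustration under stated assumptions, not the R function documented here: the names `entropy_of_counts`, `message_entropy`, and `mutual_information` are hypothetical, symbol probabilities are estimated from raw frequencies, and mutual information is computed through the identity I(X;Y) = H(X) + H(Y) - H(X,Y), with the joint entropy taken over the cross-tabulated pairs.

```python
import math
from collections import Counter


def entropy_of_counts(counts, base=2.0):
    """Shannon entropy of a distribution given as raw frequency counts."""
    counts = list(counts)
    total = sum(counts)
    return -sum(
        (c / total) * math.log(c / total, base) for c in counts if c > 0
    )


def message_entropy(message, base=2.0):
    """Estimate the average bits per symbol from symbol frequencies."""
    return entropy_of_counts(Counter(message).values(), base)


def mutual_information(xs, ys, base=2.0):
    """I(X;Y) = H(X) + H(Y) - H(X,Y) for two equally long sequences."""
    if len(xs) != len(ys):
        raise ValueError("xs and ys must have the same length")
    h_x = entropy_of_counts(Counter(xs).values(), base)
    h_y = entropy_of_counts(Counter(ys).values(), base)
    # Counting (x, y) pairs is the cross-tabulation of the two variables.
    h_xy = entropy_of_counts(Counter(zip(xs, ys)).values(), base)
    return h_x + h_y - h_xy


if __name__ == "__main__":
    print(message_entropy("aabb"))
    print(mutual_information([0, 0, 1, 1], [0, 0, 1, 1]))
    print(mutual_information([0, 0, 1, 1], [0, 1, 0, 1]))
```

Two identical variables share all of their information, so the mutual information equals the entropy of either one; two variables whose pairs are evenly spread across all combinations share none.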
